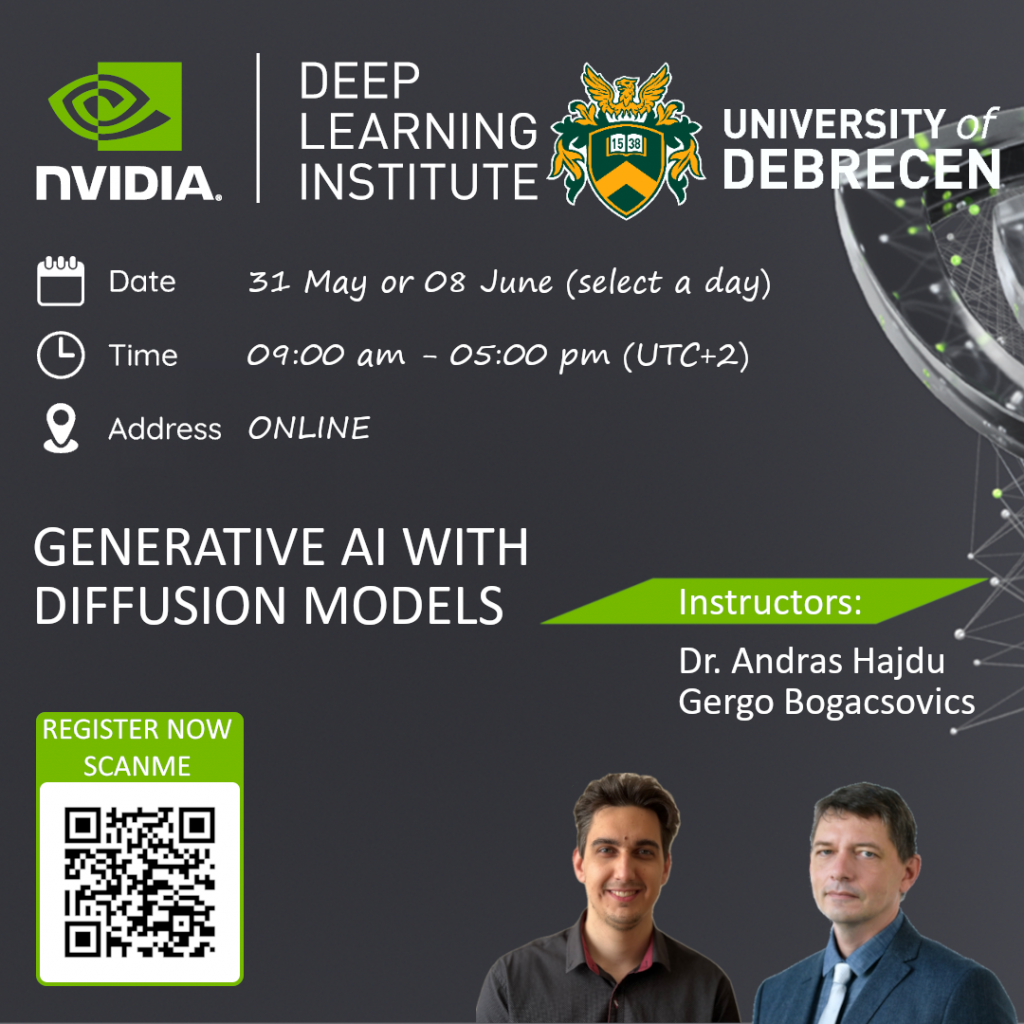

Official instructor-led NVIDIA DLI workshop

Title

NVIDIA DLI - Generative AI with Diffusion Models

Lecturers

Prof. Dr. Andras Hajdu, hajdu.andras@inf.unideb.hu

Gergo Bogacsovics, bogacsovics.gergo@inf.unideb.hu

Content and organization

Thanks to improvements in computing power and scientific theory, generative AI is more accessible than ever before. Generative AI plays a significant role across industries due to its numerous applications, such as creative content generation, data augmentation, simulation and planning, anomaly detection, drug discovery, personalized recommendations, and more. In this course, learners will take a deeper dive into denoising diffusion models, which are a popular choice for text-to-image pipelines.

Learning Objectives

- Build a U-Net to generate images from pure noise

- Improve the quality of generated images with the denoising diffusion process

- Control the image output with context embeddings

- Generate images from English text prompts using the Contrastive Language-Image Pretraining (CLIP) neural network

Topics Covered

- U-Nets

- Diffusion

- CLIP

- Text-to-image Models

Course Outline

From U-Net to Diffusion

- Build a U-Net architecture.

- Train a model to remove noise from an image.

Diffusion Models

- Define the forward diffusion function.

- Update the U-Net architecture to accommodate a timestep.

- Define a reverse diffusion function.
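As a rough illustration of what "defining the forward diffusion function" can involve, here is a minimal sketch of the closed-form noising step used in denoising diffusion models, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε. It is written in plain Python so it runs without PyTorch. The function names, the linear beta schedule, and its default endpoints are assumptions made for illustration and are not taken from the workshop materials.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Evenly spaced noise variances (assumed schedule, for illustration only)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alpha_bar(betas):
    """Running product of (1 - beta_t), usually written alpha-bar_t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def forward_diffusion(x0, t, alpha_bars, rng):
    """Noise a flat list of pixel values x0 directly to timestep t.

    Returns the noised sample and the Gaussian noise that was added,
    which is the target a denoising network is typically trained to predict.
    """
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [signal_scale * x + noise_scale * e for x, e in zip(x0, noise)]
    return x_t, noise
```

Calling `forward_diffusion(pixels, t, cumulative_alpha_bar(linear_beta_schedule(T)), random.Random(0))` with a larger `t` keeps less of the original signal. A reverse diffusion function would step the other way, using a trained network's noise estimate at each timestep.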

Optimizations

- Implement Group Normalization.

- Implement GELU.

- Implement Rearrange Pooling.

- Implement Sinusoidal Position Embeddings.
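For orientation, sinusoidal position embeddings turn an integer timestep into a fixed-length vector of sines and cosines at geometrically spaced frequencies, so the U-Net can tell noise levels apart. The sketch below shows one common variant (sines concatenated with cosines, base 10000) in plain Python. The function name and the exact frequency spacing are assumptions; the workshop's PyTorch implementation may differ in detail.

```python
import math


def sinusoidal_embedding(timestep, dim):
    """Embed a scalar timestep as [sin(t*f_0..f_k), cos(t*f_0..f_k)].

    Assumed variant: dim must be even, and the frequencies decay
    geometrically from 1 towards 1/10000.
    """
    if dim % 2 != 0:
        raise ValueError("dim must be even")
    half = dim // 2
    freqs = [math.exp(-math.log(10000.0) * i / half) for i in range(half)]
    angles = [timestep * f for f in freqs]
    return [math.sin(a) for a in angles] + [math.cos(a) for a in angles]
```

Each timestep maps to a distinct pattern across the frequencies, and the embedding needs no learned parameters.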

Classifier-Free Diffusion Guidance

- Add categorical embeddings to a U-Net.

- Train a model with a Bernoulli mask.
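To make the Bernoulli mask concrete: in classifier-free guidance, the context embedding is randomly zeroed out for some training samples, so one network learns both conditional and unconditional noise prediction. At sampling time the two predictions are blended. The following is a minimal plain-Python sketch of those two pieces. The helper names, the list-based data layout, and the drop probability are illustrative assumptions, not the workshop's code.

```python
import random


def bernoulli_mask(batch_size, drop_prob, rng):
    """Return 1.0 (keep context) or 0.0 (drop context) per sample."""
    return [0.0 if rng.random() < drop_prob else 1.0 for _ in range(batch_size)]


def mask_context(context, mask):
    """Zero out the context vector of every sample whose mask entry is 0."""
    return [[keep * value for value in row] for row, keep in zip(context, mask)]


def guided_noise(eps_cond, eps_uncond, w):
    """Blend predictions as (1 + w) * eps_cond - w * eps_uncond.

    w = 0 gives the plain conditional prediction; a larger w pushes the
    sample further towards the context.
    """
    return [(1.0 + w) * c - w * u for c, u in zip(eps_cond, eps_uncond)]
```

During training one would call `mask_context(context, bernoulli_mask(len(context), 0.1, rng))` before feeding the context to the model. The value 0.1 is only a placeholder.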

CLIP

- Learn how to use CLIP Encodings.

- Use CLIP to create a text-to-image neural network.

Level

Intermediate

Course Duration

8 hours

Course Type

Short Course

Participation terms

Free of charge for university students and staff.

Course prerequisites:

- A basic understanding of deep learning concepts.

- Familiarity with a deep learning framework such as TensorFlow, PyTorch, or Keras. This course uses PyTorch.

In addition to enrolling on the AIDA website, please also register here: https://forms.office.com/e/eJAQEpg3iY

Lecture Plan

Presentations + hands-on training: From U-Net to Diffusion (120 min), Diffusion Models (120 min), Optimizations (90 min), Classifier-Free Diffusion Guidance (90 min), CLIP (60 min)

Schedule

May 31 or June 8, 2024, 9:00–17:00 CEST (UTC+2)

Language

English

Modality

Online

Notes

Upon successful completion of the assessment, participants receive an NVIDIA Certificate of Competency.
