Artificial Intelligence for video streaming platforms

Lecturer

Prof. Ruxandra-Georgiana TAPU, ruxandra_tapu@comm.pub.ro

Content and organization

Recent advances in telecommunications, collaborated with the development of image and video processing and acquisition devices has led to a spectacular growth of the amount of the visual content data (still images, video streams, 2D graphical elements, 3D models…) stored, transmitted and exchanged over Internet. Within this context, elaborating efficient tools to access, browse and retrieve video content has become a crucial challenge. Existing approaches, currently deployed in industrial applications are based mostly on textual indexation, which shows quickly its limitations, related to the intrinsic poly-semantic nature of the multimedia content and to the various linguistic difficulties that need to be overcome.

The textual annotation has as main objective to associate a set of keywords to each individual item. In the case of huge repositories of audio-visual content, like those currently existing over Internet such a procedure requires a tremendous effort of human, manual annotation. In addition, the indexation process implies various annotators which may have different perceptions and sensibilities, which leads to subjective interpretations of the content. Finally, the multi-lingual aspects cannot be treated in a straightforward manner. This shows the interest of developing content-based indexing and retrieval systems, able to interpret and describe the semantics of the multimedia content in an automatic manner. When considering the specific issue of video indexing, the description exploited by the actual commercial search engines is monolithic and global, treating each document as a whole. Such an approach does not consider neither the informational and semantic richness, specific to video documents, nor their intrinsic spatio-temporal structure.

The presentation will be focused on various systems developed for on-line video streaming platforms as:

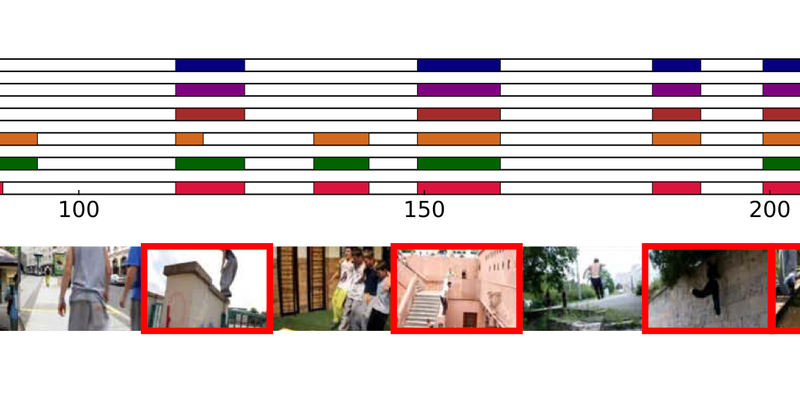

1. DEEP-AD: A Multimodal Temporal Video Segmentation Framework for Online Video Advertising:

– Shot boundary detection

– Automatic video abstraction

– Multimodal scene segmentation using: low/high level visual descriptors, audio patterns and semantic description

– Thumbnail selection from video scenes

– Ads insertion based on semantic criterion

2. Automatic subtitle synchronization and positioning:

– Text pre-processing and automatic speech recognition

– Anchor word identification and token matching

– Phrases alignment

– Subtitle/Close Caption positioning

3. DEEP-HEAR: A Multimodal Subtitle Positioning System Dedicated to Deaf and Hearing-Impaired People:

– Face detection, tracking and recognition

– Video temporal segmentation into stories

– Active speaker detection

– Subtitle positioning

Course Duration

4 hours

Course Type

Short Course

Participation terms

REGISTRATION: Free of charge

Both AIDA and non-AIDA students are encouraged to participate in this short course.

If you are an AIDA Student* already, please:

Step (a): Register in the course, please send fill the Registration form.

AND

Step (b): Enroll in the same course in the AIDA system using the button below, so that this course enters your AIDA Certificate of Course Attendance.

If you are not an AIDA Student, do only step (a).

*AIDA Students should have been registered in the AIDA system already (they are PhD students or PostDocs that belong only to the AIDA Members listed in this page: Members)

Schedule

16-17 June 2022 from 11:00 to 13:00 CET (4 hours)

Language

English

Modality (online/in person):

Online

Back to List

Back to List