Title

An Introduction to Trustworthy Machine Learning

Lecturers

Sina Sajadmanesh, sajadmanesh@idiap.ch

Ali Shahin Shamsabadi, a.shahinshamsabadi@turing.ac.uk

Daniel Gatica-Perez, gatica@idiap.ch

Content and organization

This course covers two privacy-related problems: (i) differentially private machine learning, and (ii) adversarial examples for privacy protection.

In the privacy module, we first introduce the importance of privacy and personal data from a human-centered perspective. The following lectures of this module cover differential privacy and differentially private machine learning, and introduce relevant libraries and frameworks for differentially private programming.

In the second part of the course, we first review the literature on designing adversarial examples. We then describe how the vulnerability of deep neural networks to adversarial examples can be exploited to protect the content of images and audio.

The course offers theoretical explanations followed by examples using software developed by the presenters and distributed as open source. Attendees are expected to be familiar with basic concepts in machine learning, probability, and statistics. For the practical part of the course, knowledge of Python will be helpful.

The syllabus of the course is as follows:

- Introduction and motivation (privacy and personal data)

- Differential privacy

  - Definitions

  - Differentially private machine learning

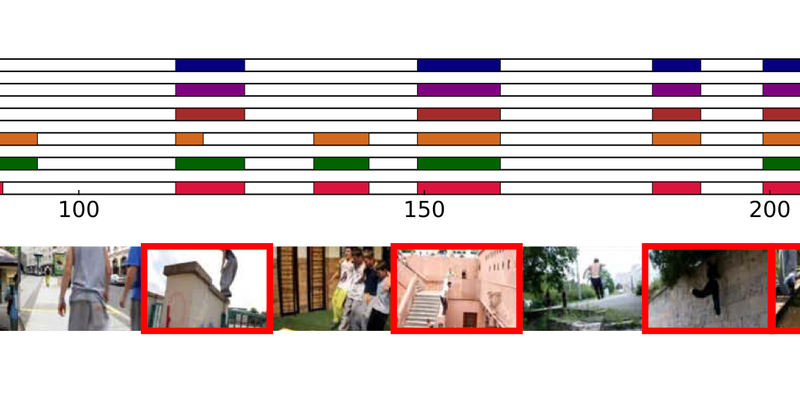

- Adversarial examples

  - Adversarial goals, knowledge, and properties

  - Defenses against adversarial examples

  - Norm-bounded and content-based adversarial examples

- Adversarial examples for privacy protection

  - Privacy and utility for images/audio in social multimedia

- Hands-on examples (with software modules distributed to the participants)

Level

Advanced (Master's and PhD)

Course Duration

6 hours (4 lectures of approximately 1.5 hours each, including short breaks)

Course Type

Short Course

Participation terms

Both AIDA and non-AIDA students are encouraged to participate in this short course. To register, please send an email to sajadmanesh@idiap.ch, and you will be provided with the link to the course.

If you are already an AIDA Student*, please also enroll in the same course in the AIDA system (button at the end of the page) so that this course is included on your AIDA Course Attendance Certificate.

*AIDA Students should already be registered in the AIDA system (they must be PhD students or postdocs belonging to the AIDA Members list, https://www.i-aida.org/about/members/).

Schedule

23-24 November 2022, 10:00-13:00 CET

Modality (online/in person):

Online via Zoom
